With information sprawling like a digital labyrinth on the internet, the role of web crawlers becomes crucial for seamless navigation. But what exactly are web crawlers, and how have they evolved into the sophisticated entities we now call AI website crawlers?

Let’s unravel the mysteries behind these digital wanderers and explore the workings that power digital discovery. Read on for the top 10 ways AI website crawlers help digital discovery.

Table of Contents

- What are Website Crawlers?

- How Do the Web Crawlers Work? — From Indexing to Automation

- Overview of How a Website Crawler Works

- What is the Frequency of Web Crawling?

- Which Webcrawler is the Best: In-House or Outsourced?

- What are AI Website Crawlers? — The Rise of Digital Explorers

- How are AI Website Crawlers Beneficial to Digital Discovery — Strict Questions, Answered!

- AI Website Crawlers Are Changing the Web Crawling Game… Big Time!

What are Website Crawlers?

At its core, a web crawler, also known as a web spider or robot, is a program designed to systematically browse the internet, indexing content for search engines or retrieving information from web pages. Think of crawlers as the diligent librarians of the internet, tirelessly cataloging the ever-expanding digital tapestry.

How Do the Web Crawlers Work? — From Indexing to Automation

Web crawlers originated with the primary goal of indexing content for search engines. As the internet burgeoned, the need for automated processes to retrieve information became apparent. Enter web scraping, where companies started using web crawlers to extract structured data from web pages, giving birth to a new era of digital exploration.

Overview of How a Website Crawler Works

The journey of a web crawler begins with downloading the website’s robots.txt file, a set of instructions that guides automated crawlers on which pages are open for exploration. Once initiated, crawlers navigate through hyperlinks, discovering new pages and adding them to the crawl queue.

This process ensures that every linked page the crawler is permitted to visit gets its moment in the digital spotlight.
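The steps above can be sketched in a few lines of Python. This is a minimal illustration rather than how any particular search engine does it: the `SITE` dictionary, the `ExampleBot` user agent, and the `fetch` helper are hypothetical stand-ins so the example runs without touching the network. A real crawler would replace `fetch` with an HTTP client.

```python
from collections import deque
from html.parser import HTMLParser
from urllib.parse import urljoin, urlparse
from urllib.robotparser import RobotFileParser

# Hypothetical in-memory "website" (assumption: stands in for real HTTP responses).
SITE = {
    "https://example.com/robots.txt": "User-agent: *\nDisallow: /private/\n",
    "https://example.com/": '<a href="/about">About</a> <a href="/private/x">X</a>',
    "https://example.com/about": '<a href="/">Home</a> <a href="/blog">Blog</a>',
    "https://example.com/blog": "<p>No links here</p>",
    "https://example.com/private/x": "<p>Secret</p>",
}


class LinkExtractor(HTMLParser):
    """Collects the href value of every <a> tag in a page."""

    def __init__(self):
        super().__init__()
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            for name, value in attrs:
                if name == "href" and value:
                    self.links.append(value)


def fetch(url):
    """Hypothetical fetcher: returns the page body, or an empty string if unknown."""
    return SITE.get(url, "")


def crawl(start_url, user_agent="ExampleBot"):
    """Breadth-first crawl of one host that honors its robots.txt rules."""
    root = urlparse(start_url)

    # Step 1: download and parse robots.txt before requesting anything else.
    robots = RobotFileParser()
    robots.parse(fetch(f"{root.scheme}://{root.netloc}/robots.txt").splitlines())

    queue = deque([start_url])  # the crawl queue
    seen = {start_url}          # URLs already queued, to avoid loops
    visited = []

    while queue:
        url = queue.popleft()
        if not robots.can_fetch(user_agent, url):
            continue  # this page is closed to crawlers
        visited.append(url)

        # Step 2: follow hyperlinks and add newly discovered pages to the queue.
        parser = LinkExtractor()
        parser.feed(fetch(url))
        for href in parser.links:
            link = urljoin(url, href)
            if urlparse(link).netloc == root.netloc and link not in seen:
                seen.add(link)
                queue.append(link)

    return visited
```

Calling `crawl("https://example.com/")` on this toy site visits the home page, `/about`, and `/blog`, and skips `/private/x` because robots.txt disallows it.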

What is the Frequency of Web Crawling?

In a world where web pages get updated and deleted regularly, the frequency of crawling becomes a critical consideration.

Factors like how often a page’s content changes and how it is linked from other pages dictate the optimal crawl rate. Search engine crawlers employ sophisticated algorithms to determine how often a page should be re-crawled, striking a delicate balance between timeliness and efficiency.
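To make the idea concrete, here is one simple heuristic a crawler could use. It is an assumption for illustration, not the algorithm any search engine actually runs: revisit sooner when a page has changed since the last visit, and back off when it has not. The function names and the default bounds (1 hour to 720 hours) are hypothetical.

```python
import hashlib


def fingerprint(content: str) -> str:
    """Hash the page body so two versions can be compared cheaply."""
    return hashlib.sha256(content.encode("utf-8")).hexdigest()


def next_interval(current_hours, changed, min_hours=1.0, max_hours=720.0):
    """Halve the revisit interval if the page changed, otherwise double it.

    The result is clamped to [min_hours, max_hours] (illustrative bounds).
    """
    factor = 0.5 if changed else 2.0
    return max(min_hours, min(max_hours, current_hours * factor))


def schedule(previous_content, new_content, current_hours):
    """Compare two snapshots of a page and return the next revisit interval."""
    changed = fingerprint(previous_content) != fingerprint(new_content)
    return next_interval(current_hours, changed)
```

With this scheme, a page crawled every 24 hours that turns out to have changed is next visited after 12 hours, while an unchanged page waits 48 hours.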

Which Webcrawler is the Best: In-House or Outsourced?

To embark on a web crawling expedition, companies face the choice between building an in-house crawler or opting for pre-built, outsourced solutions.

Building in-house allows customization based on specific needs, but it demands development and maintenance efforts. On the flip side, pre-built crawlers offer simplicity but may lack the flexibility required for tailored exploration.

What are AI Website Crawlers? — The Rise of Digital Explorers

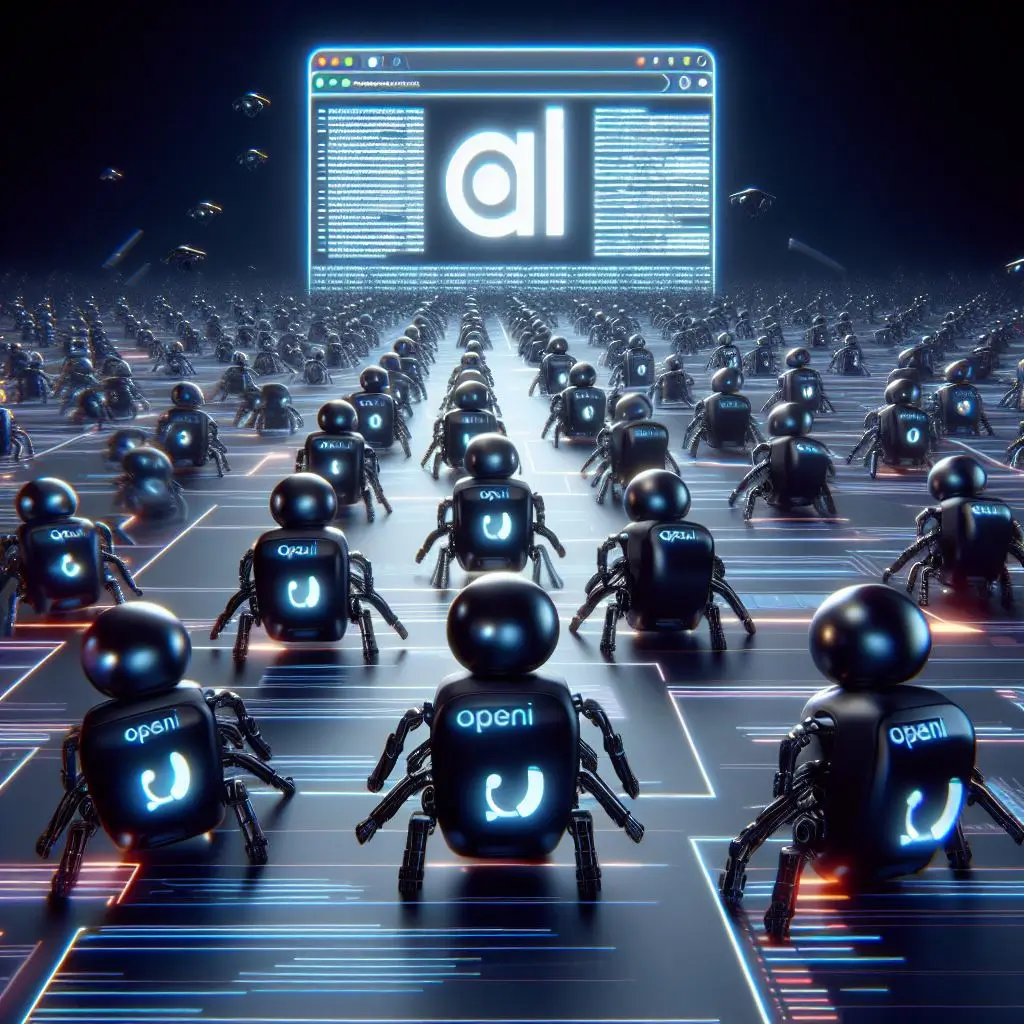

As the era of AI dawned, web crawlers evolved into intelligent entities known as AI website crawlers. OpenAI’s GPTBot, a notable player in this arena, introduced a new dimension by gathering web data to improve AI models.

However, this digital evolution didn’t go unnoticed, leading to major websites erecting virtual barricades against these AI-powered wanderers.

How are AI Website Crawlers Beneficial to Digital Discovery — Strict Questions, Answered!

AI website crawlers are all over the internet… yes, really! (pun intended). But what we really want to know is how they benefit us, right?

1. What advantages do AI-powered website crawlers offer over traditional methods?

AI-powered crawlers can analyze and understand website content more intelligently, leading to more accurate indexing and faster discovery of relevant information. They can also adapt to changes in website structures more effectively.

2. Are there any limitations or challenges associated with using AI in website crawling?

While AI-powered crawlers offer many benefits, they may face challenges in handling complex website structures or accurately interpreting certain types of content, such as multimedia or dynamically generated pages.

3. How do AI website crawlers ensure compliance with website owners’ rules and regulations, such as robots.txt?

AI crawlers are typically designed to respect robots.txt directives and other rules set by website owners. They can be configured to adhere to specific crawl rate limits and exclusion protocols to ensure compliance with legal and ethical guidelines.

4. Can AI-powered crawlers differentiate between valuable content and spam or irrelevant information?

Yes, AI algorithms can be trained to distinguish between valuable content and spam or low-quality information. They use various techniques such as natural language processing, machine learning, and semantic analysis to assess content quality and relevance.

5. Do AI website crawlers pose any privacy concerns for users?

Privacy concerns may arise if AI crawlers collect sensitive or personally identifiable information from websites without consent. It’s important for developers to implement measures such as anonymization and data minimization to mitigate these risks and ensure compliance with privacy regulations.

6. How do AI website crawlers handle dynamic and JavaScript-rendered content?

AI crawlers are equipped with technologies such as headless browsers and dynamic rendering capabilities to navigate and index websites that rely heavily on JavaScript or dynamically generated content. This enables them to access and analyze content that traditional crawlers may struggle to interpret.

7. What role do AI website crawlers play in enhancing search engine optimization (SEO) efforts?

AI-powered crawlers can provide valuable insights into website performance, keyword usage, and content quality, helping website owners optimize their pages for better visibility in search engine results. They can identify areas for improvement and recommend strategies to boost search rankings.

8. Are there any notable examples of companies or projects using AI website crawlers effectively?

Several companies and research projects leverage AI-powered crawlers for various purposes, such as web indexing, content monitoring, and competitive analysis. Examples include companies specializing in SEO analytics, market research firms, and academic institutions conducting web data mining studies.

9. What measures are in place to prevent AI website crawlers from overloading servers or causing performance issues for websites?

AI crawlers typically implement mechanisms to control crawl rates and prioritize requests to avoid overwhelming servers or causing disruptions to website performance. They may also incorporate features like caching and parallel processing to optimize resource usage and minimize impact on server infrastructure.
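As a rough sketch of crawl-rate control, the class below enforces a minimum delay between requests to the same host. The `HostRateLimiter` name, the default delay of one second, and the injectable `clock` and `sleep` parameters are assumptions made for illustration, and real crawlers may use more elaborate schemes.

```python
import time
from urllib.parse import urlparse


class HostRateLimiter:
    """Enforces a minimum delay between consecutive requests to one host."""

    def __init__(self, min_delay_seconds=1.0, clock=time.monotonic, sleep=time.sleep):
        self.min_delay = min_delay_seconds
        self._clock = clock          # injectable so the limiter can be tested
        self._sleep = sleep
        self._last_request = {}      # host -> time of the most recent request

    def wait(self, url):
        """Sleep if needed before requesting url; return the seconds waited."""
        host = urlparse(url).netloc
        now = self._clock()
        last = self._last_request.get(host)
        waited = 0.0
        if last is not None:
            # Time still owed to this host before the next request is polite.
            waited = max(0.0, self.min_delay - (now - last))
            if waited > 0:
                self._sleep(waited)
        self._last_request[host] = now + waited
        return waited
```

A crawler would call `limiter.wait(url)` immediately before each request. Requests to different hosts do not delay each other, so the crawler stays busy while each individual server sees only a gentle trickle of traffic.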

10. How to block AI website crawlers from crawling your website?

Several plugins can handle this, or you can add a rule to your site’s robots.txt file that disallows the crawler’s user agent. For a detailed guide, see this post: How to Block OpenAI AI Website Crawler?
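One widely used way to opt out is a robots.txt rule aimed at the crawler's user agent. The sketch below embeds such a rule for GPTBot in a Python string and uses the standard library's `urllib.robotparser` to check its effect. The `is_allowed` helper and the example URLs are hypothetical, and robots.txt is only a request: it works when the crawler chooses to honor it.

```python
from urllib.robotparser import RobotFileParser

# Contents you would place in /robots.txt at the root of your site.
ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""


def is_allowed(user_agent, url, robots_txt=ROBOTS_TXT):
    """Return True if the given robots.txt text lets user_agent fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)
```

Here `is_allowed("GPTBot", "https://example.com/post")` returns `False`, while the same check for any other user agent returns `True`, so regular search engine crawlers are unaffected.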

AI Website Crawlers Are Changing the Web Crawling Game… Big Time!

In the grand narrative of the internet’s evolution, web crawlers, and their AI-infused counterparts, emerge as pioneers of digital discovery.

As we demystify their workings and explore the nuanced dance between innovation and ethical considerations, one thing becomes clear – these digital explorers are not just indexing content; they’re shaping the very landscape of our digital existence.

The journey continues, and with each crawl, the story unfolds. Just don’t forget to stay tuned to Tech Trend Tomorrow, okay?